AUGMENTED REALITY + MIXED REALITY

FAA

Overview

Problem

Air traffic controllers do an essential job that doesn’t get a lot of attention. Though they no longer work on analog systems, their new digital systems are mostly a 1:1 recreation of technology that’s been used for decades. The FAA is looking ahead to the next 20 years and to what the controller workstation of the future could look like.

Solution

The FAA project focuses on researching current-state and future-state technologies to create an immersive, NextGen human-in-the-loop system that fully supports air traffic controllers in their daily tasks.

After talking to SMEs and doing some initial task analyses, I determined that the main focus of this project should be increasing controllers' spatial situational awareness and reducing cognitive overload at all costs. Controllers are often inundated with auditory stimuli. By distributing these cues across other sensory modalities, we could soften the blow to their working memory during peak traffic times. This would be especially important because immersing their workstation in an AR/MR environment would itself add stimuli.

Role

Design Lead for the XR prototype. This project is ongoing and in the early conceptual design phase.

Humanspace Concept

Air traffic controllers are already familiar with the concept of “airspaces.” I extended this metaphor to their individual workstations (“personal spaces”) and common meeting areas (“collaboration spaces”).

Personalspace

The Personal Bubble

While in their personalspaces, controllers would work inside a holographic bubble. This bubble would change color to signal to their co-workers that they were either open to collaboration (blue) or busy and not wanting to be bothered (yellow). The color of the bubble would also synchronize with the state of their noise-canceling earbuds (transparency vs. noise-canceling mode). SMEs indicated that during peak traffic times the control room can get very loud, so having noise-canceling earbuds for speaking with pilots would be a huge benefit.

The bubble would also act as a cueing mechanism. An important part of a controller's job is handing off aircraft to the next sector. If this hand-off is missed, there can be huge consequences. If an aircraft were exiting their airspace without a hand-off, the bubble could show a ripple or disturbance in the general direction of the aircraft, along with a spatial audio cue and a haptic cue from a wearable.
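To make the two bubble behaviors concrete, here is a minimal sketch of how the state-to-color mapping and the missed hand-off cue might be modeled. Everything in it is an assumption for illustration: the circular sector, the distances, the callsigns, and the names `BubbleState`, `Aircraft`, and `handoff_cues` are hypothetical, and the pairing of each color with an earbud mode is inferred rather than specified in the concept.

```python
import math
from dataclasses import dataclass
from enum import Enum


class BubbleState(Enum):
    OPEN = "open"  # open to collaboration
    BUSY = "busy"  # does not want to be bothered


# Colors come from the concept; the earbud pairing is an assumption.
BUBBLE_COLOR = {BubbleState.OPEN: "blue", BubbleState.BUSY: "yellow"}
EARBUD_MODE = {BubbleState.OPEN: "transparency", BubbleState.BUSY: "noise_canceling"}


@dataclass
class Aircraft:
    callsign: str     # hypothetical identifier
    x_nm: float       # east offset from sector center, nautical miles
    y_nm: float       # north offset from sector center, nautical miles
    handed_off: bool  # True once the next sector has accepted the aircraft


def handoff_cues(aircraft, sector_radius_nm=50.0, margin_nm=5.0):
    """Return (callsign, bearing_deg) for aircraft near the boundary with no hand-off.

    The sector is simplified to a circle; real sectors are irregular volumes.
    The bearing (0 = north, clockwise) is where the ripple, spatial audio,
    and haptic cue would be placed around the controller.
    """
    cues = []
    for ac in aircraft:
        distance = math.hypot(ac.x_nm, ac.y_nm)
        if not ac.handed_off and distance >= sector_radius_nm - margin_nm:
            bearing = math.degrees(math.atan2(ac.x_nm, ac.y_nm)) % 360
            cues.append((ac.callsign, round(bearing)))
    return cues
```

Returning a bearing rather than a screen position keeps the cue modality-agnostic, so the same value could drive the visual ripple, the spatial audio source, and the haptic wearable.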

AR/MR UI Panels with Control Knob

Controllers are flipping between different programs constantly. To expand their workstation, I designed an augmented and mixed reality UI system.

(Note: As this project is forward-looking, we have not limited ourselves to a specific piece of hardware. We have assumed that either AR headsets have become lightweight enough to wear for extended periods with a natural field of view, or that projection mapping technology has advanced enough that people no longer need to wear headsets to see holograms in their space.)

This system would replace their normal monitors with three physical panels. Holograms of their programs would be projected onto these panels. While they could have an unlimited number of programs open and viewable, only the UIs currently overlaid onto the physical panels would be opaque and fully visible. Everything else would be semi-transparent and out of focus. Keeping this information within reach but somewhat out of sight would help balance the cognitive demands on the user.

To navigate around the panels, the user could use a naturalistic pinch-and-drag gesture to rearrange their order. However, their primary means of navigation would be a physical control knob. It was important to me to keep as many components of the system as possible tactile, as this would allow controllers to interact with and manipulate their UIs without having to look at them (as opposed to a touchscreen-based system). We could also use predictive AI to guide users toward what we expect they should be looking at, by increasing the tension or difficulty of turning the knob toward the incorrect screen.
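As a rough illustration of the guided-knob idea, the sketch below returns a resistance multiplier for a knob turn depending on whether the turn moves toward or away from the panel a prediction model suggests. The function name `knob_resistance`, the panel indexing, and the `base` and `penalty` values are all hypothetical; the prediction itself is treated as a given input.

```python
def knob_resistance(current: int, direction: int, predicted: int,
                    base: float = 1.0, penalty: float = 2.0) -> float:
    """Return the resistance multiplier for one knob turn.

    current   -- index of the panel currently in focus (e.g. 0, 1, 2)
    direction -- +1 to move to the next panel, -1 to move to the previous one
    predicted -- index of the panel the (assumed) prediction model suggests
    """
    if predicted == current:
        # Already on the suggested panel, so any turn moves away from it.
        return base * penalty
    toward = 1 if predicted > current else -1
    return base if direction == toward else base * penalty
```

For example, `knob_resistance(0, 1, 2)` returns `1.0` (turning toward the suggested panel), while `knob_resistance(1, -1, 2)` returns `2.0` (turning away from it). The knob only becomes stiffer and is never locked, so the controller keeps final authority over where they look.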

3D Visualization of Airspace

Current controller radars are 2D. While this helps them understand how far apart aircraft are spaced horizontally, it leaves them in the dark on vertical spacing. Most of the time this works fine, but during peak traffic times, controllers indicated that it would be beneficial to see that spacing in 3D. In those cases, the controller could gesture to pull out their 2D radar and drop it in their bubble. Dropping the UI in their bubble would immediately turn it into a 3D view.

Early 3D visualization of a radar scope.

Early 3D visualization of aircraft. The highlighted section of the torus indicates direction of travel.

Natural Language Processing

A large portion of a controller’s job is giving verbal commands to pilots and then updating their system with those commands. At times of peak traffic, this gets overwhelming quickly. They often have multiple pilots speaking to them at once, with no ability to replay what was said.

By integrating natural language processing into their personal bubbles, we could achieve a few wins:

- Per coactivation theory, people understand and react to information faster when they hear and see it simultaneously than when they receive it through a single modality.

- If controllers were experiencing a moment of extreme overload during peak traffic, they could choose to simply read what pilots were saying to them and respond by speaking asynchronously, further balancing cognitive load.

- Pilots from international flights can sometimes be difficult for controllers to understand. A visual readout of their words would reduce the need for the controller to ask the pilot to repeat what they said.

Personal AI Assistant

A personal AI assistant could tie in naturally with natural language processing. Instead of looking for the latest weather, controllers could simply ask their assistant to pull it up for them.

By utilizing eye tracking, the assistant could also remind controllers to look at notifications the system knows they haven’t looked at yet.
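The reminder logic could be as simple as tracking whether the eye tracker has registered a fixation on each notification. The sketch below assumes exactly that; the `Notification` fields, the `overdue_notifications` helper, and the 30-second threshold are hypothetical placeholders rather than values from the research.

```python
from dataclasses import dataclass


@dataclass
class Notification:
    note_id: str          # hypothetical identifier
    posted_at_s: float    # time the notification appeared, in seconds
    viewed: bool = False  # set True once the eye tracker registers a fixation


def overdue_notifications(notes, now_s, max_unviewed_s=30.0):
    """Return ids of notifications that are still unviewed after the threshold."""
    return [
        n.note_id
        for n in notes
        if not n.viewed and now_s - n.posted_at_s >= max_unviewed_s
    ]
```

The assistant would then surface only the returned ids, so notifications the controller has already glanced at never generate an extra prompt.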

AR Remote Collaboration

While controllers often communicate with pilots, they communicate with other controllers nearly as often. Sometimes these controllers are in the same room, and sometimes they are in a center far away. To better facilitate this communication, co-workers could remotely enter another controller’s personal bubble through remote collaboration.

A digital avatar of the co-worker would appear in the bubble when they call. This avatar could be customized to a) humanize the person on the call and b) promote the user’s internal locus of control by giving them control over the inconsequential.

A huge pain point revealed by controllers was that sometimes the person you’re talking to just isn’t looking at the same information you are. To address this, the controller in their bubble could swipe over the digital avatar of their co-worker to reveal the UI their co-worker is currently looking at.

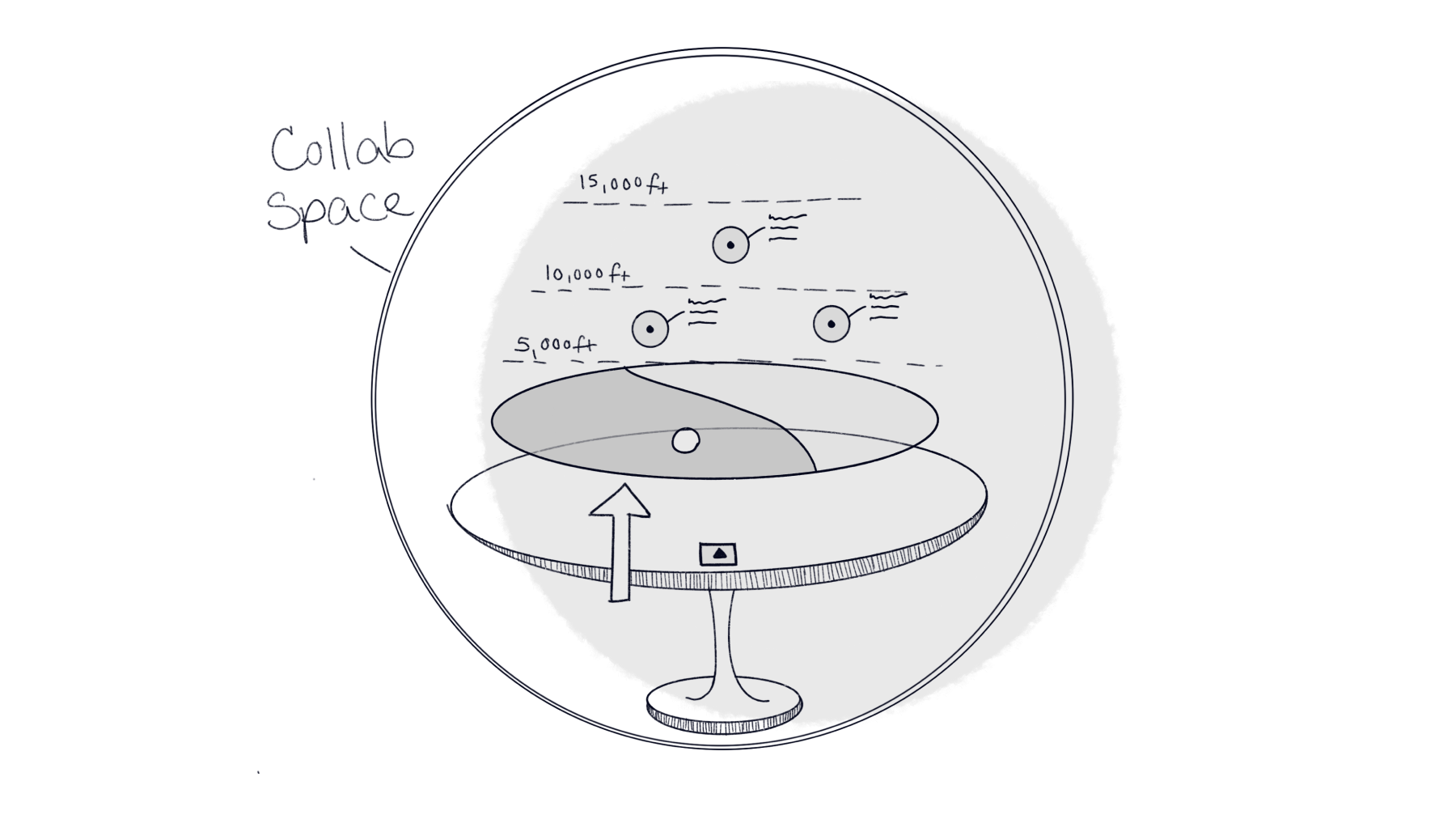

Collaborationspace

If an unexpected event required the center to rally the troops, the controllers could leave their personalspaces and enter a central collaborationspace.

The Collaboration Bubble

The collaboration bubble would provide a 3D, holistic view of the center’s airspace along with weather and traffic data. Co-located controllers could physically stand up and walk to the collaboration bubble, or remote in with their digital avatar. Controllers from different centers who also needed to collaborate could be present with their avatars.

AR Table & UI Lazy Susan

While controllers are gathered around a physical table, AR holograms would be overlaid on it, showing a 3D view of the airspace. UI elements and data filters would be hidden by default. A swipe-up gesture would reveal additional UI elements arranged around the table. This UI could then be swiped to spin it to the desired information, much like a lazy Susan at a dinner table.

Enhanced Human Concept

There was a lot of talk of new and innovative technology for this project, but I wanted to come up with a concept that focused more on the human and on supporting their needs from a cognitive standpoint. Air traffic control is, at its core, a vigilance task. Taking inspiration from Dune and cognitive research, I came up with this concept. By combining the following methodologies and redesigning control centers to have a more relaxing atmosphere, we could maximize the human performance of controllers.

Transcranial Direct Current Stimulation (tDCS)

tDCS is a neuromodulation technique that delivers a constant, low current through electrodes on the head to increase human performance. Some initial research has shown an increase in target detection in simulated air traffic control tasks. We could integrate these electrodes into a head-worn display to increase controller detection rates and decrease vigilance decrement (reference).

Binaural Auditory Beats

Binaural auditory beats are an auditory illusion in which two tones with slightly different frequencies are played separately, one into each ear. Low-frequency binaural beats have been shown to promote mental relaxation, while high-frequency beats are associated with attentional concentration (reference).
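To show how simple the underlying signal is, here is a minimal sketch that generates stereo samples for a binaural beat: one pure tone in the left ear and a slightly higher tone in the right, with the perceived beat equal to the difference between the two frequencies. The function name `binaural_samples` and the carrier and beat frequencies in the usage note are illustrative assumptions, not values taken from the cited research.

```python
import math


def binaural_samples(carrier_hz, beat_hz, duration_s, sample_rate=44100):
    """Return a list of (left, right) samples in the range [-1.0, 1.0].

    The left ear hears carrier_hz and the right ear hears carrier_hz + beat_hz,
    so the perceived beat frequency is beat_hz.
    """
    n_samples = int(duration_s * sample_rate)
    frames = []
    for i in range(n_samples):
        t = i / sample_rate
        left = math.sin(2 * math.pi * carrier_hz * t)
        right = math.sin(2 * math.pi * (carrier_hz + beat_hz) * t)
        frames.append((left, right))
    return frames
```

For instance, `binaural_samples(200.0, 10.0, 0.5, sample_rate=8000)` yields 4000 stereo frames with a 200 Hz tone on the left and a 210 Hz tone on the right. Because the effect depends on each ear receiving a different tone, it would need to be delivered through the controller's earbuds rather than room speakers.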

Aromatherapy

Several studies have shown the positive effects of certain smells on mood, attention, and vigilance. It’s also important to note that even though olfaction is usually overlooked, it could play a huge role in cueing important information in my never-ending quest to distribute cognitive load (reference).

Background Music

Background music has been shown to have a positive effect on concentration and attention. Research has shown that playing people’s preferred music is the crucial component in getting performance gains. The music controllers play in the background as they work could tie in with their customization settings. However, it’s important to note that while listening to music is great for vigilance, it may negatively impact other cognitive processes and should be used with caution (reference).
